Please no more (AI slop)

It's more work than you think to read it.

This is really a cry for help.

As an engineer I’m operating in a space where most people are reaching immediately for an LLM ‘right out the gate’.

You see people let Claude, Gemini, OpenAI, etc generate summaries and visualizations of things. You can tell because the visualizations all look alike or are highly derivative of some design / representation.

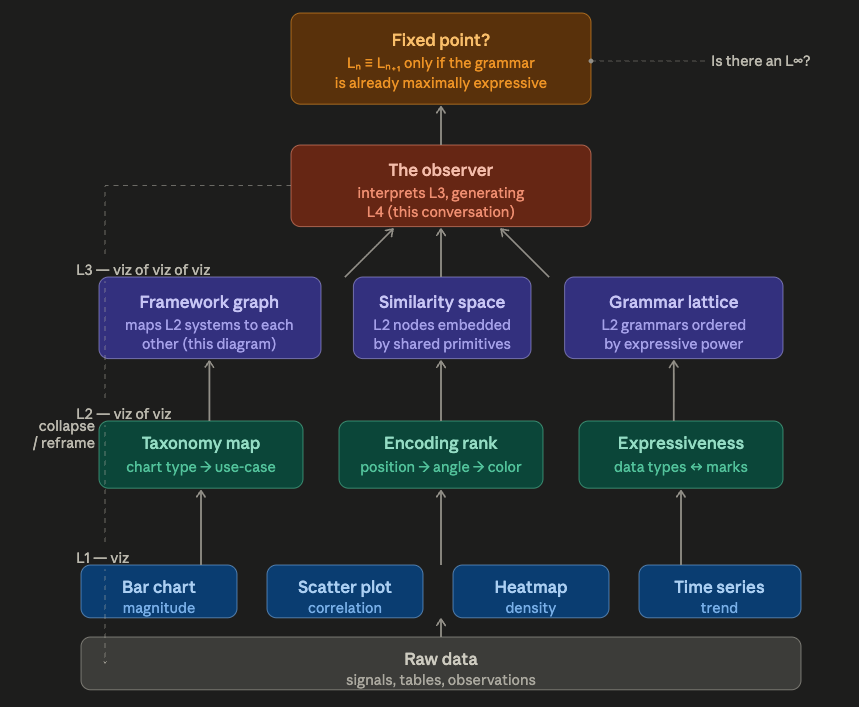

Look at this prompt. It’s uninteligble and makes no sense. Asking Claude to “humor me” proably didn’t matter. The goal here is ‘output’. The economic motivation of Anthropic translates down to the model doing the thing it was tasked with doing, even if it’s nonsensical.

The output is feasible, but it’s answering things I didn’t really ask. This isn’t “reasoning” because there is no causal link that validates the output image based on what I prompted. In addition, it’s really hard to understand the visualization given the prompt. You are being force-fed information you did not ask for. This happens even with reasonably articulate prompts.

Uncanny valley of generated text

I’ve never been able to digest massive amounts of information in shapes like:

Massive Paragraph Here Massive Paragraph Here

Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here

Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here

Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here

Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here

Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here

Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here

Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here

Massive Paragraph Here Massive Paragraph Here Massive Paragraph Here

Massive Paragraph Here Massive Paragraph Here

- bullet point

- bullet point

- bullet point

Derivative "to be honest", "worth raising", verbiage here.

Slightly smaller paragraph

Slightly smaller paragraph Slightly smaller paragraph

Slightly smaller paragraph Slightly smaller paragraph Slightly smaller paragraph

Slightly smaller paragraph

Slightly smaller paragraph

- bullet point

- bullet point

- bullet pointMy attention span is not that broad, and I find the LLM-style of prose wholly unreadable more than half the time. To be fair, I am able to easily read things a human being actually wrote, but I very much get “uncanny valley” on the LLM generated word soup.

I would just ask that people working with engineers, especially polyglots like myself, be mindful of what they’re submitting / sending. I find AI generated code, documentation, and article summaries very hard to read, just because of the nature of what generated them.

Why it sucks and reads like word soup

AI-generated text prioritizes the high-probability and predictable patterns and lack authentic human voice.

There is no soul in the stochastic chaos box. The more token currency you shovel into the fires for the crab god, the more word soup you get.

It’s overly verbose, and while grammatically correct and polished, it has zero human writing nuances or personality and is stuffed full of platitudes and other unsettling mechanisms like excessive formality and complexity. Reading this stuff is torture.

I built CritPost.com as an ironic project to point out how bad it is. Even LLM-as-a-judge can quickly pick up on other LLM content as being “low effort”, “unoriginal”, or just outright “slop”.

AI generated code

As an aside, most of the AI generated vibe code I’ve seen follows a similar structure with zero architectural discipline:

my-app/

├── .env

├── .env.local

├── .env.example

├── config.js

├── constants.js

├── constants2.js

├── helpers.js

├── utils.js

├── utils2.js

├── index.js

├── src/

│ ├── index.js

│ ├── app.js

│ ├── config.js

│ ├── constants.js

│ ├── utils.js

│ ├── helpers.js

│ ├── lib/

│ │ ├── utils.js

│ │ ├── helpers.js

│ │ ├── formatters.js

│ │ ├── formatDate.js

│ │ ├── dateUtils.js

│ │ ├── validators.js

│ │ ├── validation.js

│ │ ├── validate.js

│ │ ├── auth.js

│ │ ├── authUtils.js

│ │ └── authHelpers.js

│ ├── hooks/

│ │ ├── useAuth.js

│ │ ├── useAuthUser.js

│ │ ├── useCurrentUser.js

│ │ ├── useFetch.js

│ │ ├── useFetchData.js

│ │ ├── useData.js

│ │ ├── useLocalStorage.js

│ │ └── useLocalStorageState.js

│ ├── services/

│ │ ├── api.js

│ │ ├── apiClient.js

│ │ ├── apiService.js

│ │ ├── http.js

│ │ ├── httpClient.js

│ │ ├── authService.js

│ │ ├── userService.js

│ │ └── UserService.js

│ ├── components/

│ │ ├── Button.jsx

│ │ ├── ButtonComponent.jsx

│ │ ├── CustomButton.jsx

│ │ ├── Modal.jsx

│ │ ├── ModalComponent.jsx

│ │ ├── Popup.jsx

│ │ ├── Header.jsx

│ │ ├── Navbar.jsx

│ │ ├── Nav.jsx

│ │ ├── common/

│ │ │ ├── Button.jsx

│ │ │ ├── Input.jsx

│ │ │ ├── FormInput.jsx

│ │ │ └── utils.js

│ │ └── shared/

│ │ ├── Button.jsx

│ │ ├── Input.jsx

│ │ └── helpers.js

│ ├── store/

│ │ ├── index.js

│ │ ├── store.js

│ │ ├── rootReducer.js

│ │ ├── authSlice.js

│ │ ├── authReducer.js

│ │ ├── userSlice.js

│ │ └── userReducer.js

│ └── types/

│ ├── index.ts

│ ├── types.ts

│ ├── global.d.ts

│ └── global.types.ts

├── api/

│ ├── index.js

│ ├── routes.js

│ ├── router.js

│ ├── middleware.js

│ ├── middlewares.js

│ ├── auth.js

│ ├── authMiddleware.js

│ ├── db.js

│ ├── database.js

│ └── models/

│ ├── User.js

│ ├── user.js

│ ├── userModel.js

│ └── index.js

├── scripts/

│ ├── seed.js

│ ├── seedData.js

│ ├── migrate.js

│ ├── migration.js

│ └── cleanup.js

└── tests/

├── utils.test.js

├── helpers.test.js

├── auth.test.js

├── authUtils.test.js

└── __mocks__/

├── api.js

└── apiClient.jsYou might initially be like “what’s wrong with this” and I assure you “what’s wrong” is a question with a lot of answers.

Name drift, esp. in utility type logic:

See this one constantly. utils.js / utils2.js / helpers.js / dateUtils.js / formatDate.js end up 80% the same function set accumulating across disparate AI coding sessions

Depth duplication, esp. in components: Button.jsx lives in components/ , components/common, and components/shared. There’s root-level shadowing in src/ with more of the same.

Claude Code is better at this, but it burns a ton of tokens on these sprawling monorepos just trying to figure out what tf is going on.

Case collision: Saw this one recently, where disparate AI sessions had written the same (mostly) service with different cases for names. Replicating with Claude Code is rare, I’ll admit.

God objects:

Especially config.js or config.py type files that end up being junk drawers importing ‘all the things’.

Write your own scaffold

There is merit in learning how to actually architect and write software. There are so many BAD examples on the internet of how to build that it’s not surprising LLMs spit out some questionable slop and terrible architectures.

If you write your own scaffold, even in pseudo-code, and even using an LLM to help you ideate stepwise, then you can build off a solid foundation and not a flaky shaky slop frame.

Use your own brain

Humans are being trained by LLMs to reward-hack in our own learning processes. The Google Effect applies to LLMs too in many ways. The “Cognitive Surrender” folks have been talking about is almost literally this. Impacts to short-term and long-term memory. Inability to reason without the crutch of low-effort generation. Try writing your own code, or learning some about the standard libraries for Python, Javascript/Typescript, Rust, etc. Challenge your mind a bit to think and don’t take the easy way out. Your motivators might be real (“money” or “clout”), but the long-term impact to your brain is not great. Also, when you generate a bunch of slop and someone calls you out on it, how can you even defend anything?